Facial recognition is increasingly embedded in everything from airport boarding gates to bank onboarding flows. The widely held assumption is that a face is hard to fake and that matching a live face to a trusted source is a reliable identity signal.

Jake Moore, ESET Global Cybersecurity Advisor, recently put this assumption through several practical stress tests. His experiments showed that the powerful technology can actually be both misused and defeated.

In one test, Jake used a pair of modified off-the-shelf smart glasses that can identify people in real time. He walked through a public space, captured people’s faces and compared them against publicly available online data sources, with identity matches returned within seconds. Names and social media profiles were pulled together from nothing more than a passing glance at each person.

This ability might come in handy if, say, a conference attendee struggles to remember people's names, but it’s far less palatable when you consider what someone with ill intentions could do with that information.

The second demo had a different spin. It went after financial services, turning a fraud prevention system against itself. Using AI-generated images and freely available software, Jake created a fictitious face and used it to open an actual bank account. The bank's facial recognition and eKYC (electronic know your customer) platform accepted it as a genuine person.

After proving the point, Jake closed the account and shared all information with the bank, which has since shut down that specific method of identity abuse. But one broader question remains: how many financial institutions may still be susceptible to this kind of attack?

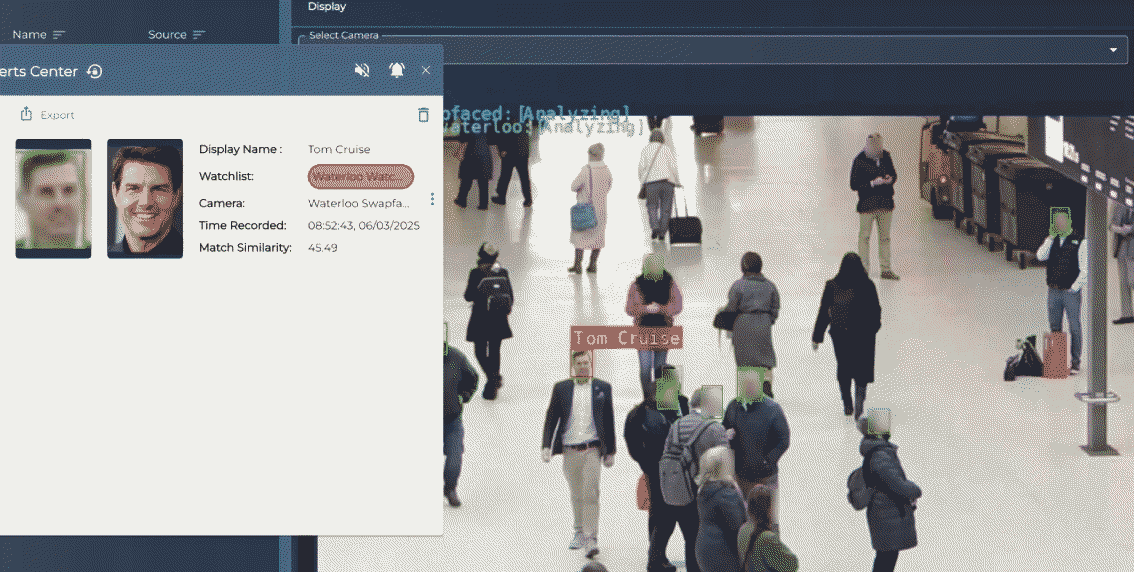

Lastly, Jake added himself to a facial recognition watchlist at a busy train station in London. He then walked through the monitored area while running real-time face swap software that overlaid Tom Cruise’s likeness onto his own in the camera feed. The system, which is also used by the UK police, never recognized or flagged him. It was as if he simply wasn't there, and anyone actively searching for him on CCTV would have seen the actor instead.

There's a lot more to these experiments than we can cover here – they’re all part of Jake’s talk at RSAC 2026, which takes place in San Francisco from March 23 to 26, 2026. If you're at the conference, consider attending the talk – after all, seeing all of this work against an in-production system in a live environment is different from ‘just’ reading about it. To learn more, including about other ESET talks at the conference, visit this website.

The big picture

Facial recognition systems are being deployed with implicit trust that doesn't match their actual resilience when someone tries to break them – even when the attacker uses only off-the-shelf consumer hardware and easily available software, just as Jake did. Identity verification that depends solely on a face match clearly carries more risk than most people and organizations realize.

The experiments also send a message to vendors of facial recognition systems and anyone responsible for identity verification systems. Among other things, the systems should be tested in attack simulation settings and under other adversarial conditions. The technology behind facial recognition is fragile in ways that matter when someone attempts to subvert it.